Every few years, someone publishes a piece arguing that leaf-spine is dead, overengineered, or about to be replaced by some newer topology. And every time, the data center networking community collectively shrugs and keeps building leaf-spine fabrics — because the fundamentals that made leaf-spine the dominant architecture haven’t changed.

That said, “leaf-spine is the right answer” is not the same as “any leaf-spine implementation is the right answer.” The architecture is sound. The details of how it’s implemented — underlay design, overlay choice, scale assumptions, operational tooling — matter enormously. And there are legitimate situations where alternatives deserve consideration.

This article is a pragmatic look at why leaf-spine with EVPN/VXLAN remains the standard, where the real implementation decisions are, and when you might reasonably look at something else.

Why Leaf-Spine Emerged

To understand why leaf-spine became dominant, you have to understand what it replaced.

The traditional three-tier data center architecture (access, aggregation, core) was designed for north-south traffic flows: clients reaching servers through a hierarchy of switches. Spanning Tree Protocol (STP) managed loop prevention by blocking redundant paths, which meant that at any given time, you were using only a fraction of your available bandwidth. Traffic climbed the hierarchy to the aggregation or core layer and descended on the other side, even when communicating between servers in the same row.

As virtualization became ubiquitous, east-west traffic — VM-to-VM, container-to-container, service-to-service within the data center — began to dominate. The three-tier architecture was badly suited for this. Traffic had to traverse multiple switch hops to get between adjacent servers, latency was unpredictable based on where traffic entered the hierarchy, and STP’s blocked paths meant expensive hardware was sitting largely idle.

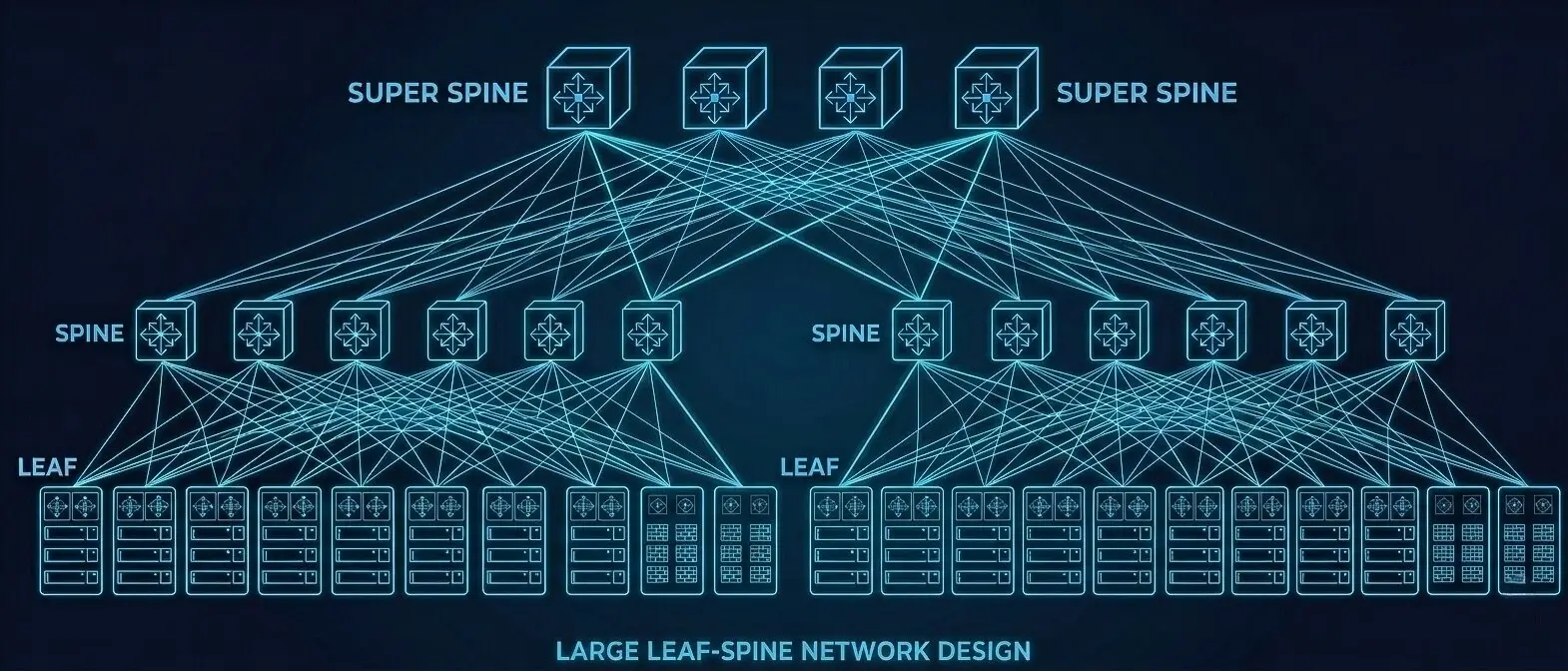

Leaf-spine solves these problems elegantly. Every leaf switch connects to every spine switch. Every server connects only to a leaf switch. The result is that any two servers on different leaves are always exactly two hops apart (leaf to spine, spine to leaf) regardless of physical location, while servers on the same leaf are switched locally. Latency is consistent and predictable. All paths are active simultaneously via ECMP. Adding capacity is as simple as adding another spine (for oversubscription relief) or another leaf (for additional server ports).
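The full-mesh wiring rule can be sketched in a few lines of Python. This is an illustrative model only: the device names and the `build_fabric` and `ecmp_paths` helpers are made up for this example, and real ECMP is computed by the routing protocol, not by set intersection.

```python
from itertools import product

def build_fabric(num_leaves: int, num_spines: int) -> set[tuple[str, str]]:
    """Return the set of (leaf, spine) links: every leaf connects to every spine."""
    leaves = [f"leaf{i}" for i in range(1, num_leaves + 1)]
    spines = [f"spine{i}" for i in range(1, num_spines + 1)]
    return set(product(leaves, spines))

def ecmp_paths(links, src_leaf: str, dst_leaf: str) -> list[tuple[str, str, str]]:
    """List the equal-cost leaf -> spine -> leaf paths between two different leaves."""
    if src_leaf == dst_leaf:
        return []  # same-leaf traffic never touches a spine
    up = {spine for (leaf, spine) in links if leaf == src_leaf}
    down = {spine for (leaf, spine) in links if leaf == dst_leaf}
    return [(src_leaf, spine, dst_leaf) for spine in sorted(up & down)]

links = build_fabric(num_leaves=4, num_spines=2)
paths = ecmp_paths(links, "leaf1", "leaf3")
print(len(links), "links;", len(paths), "equal-cost paths between leaf1 and leaf3")
```

In this model the number of equal-cost paths between any two leaves equals the number of spines, which is why adding a spine adds inter-leaf bandwidth everywhere at once.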

EVPN/VXLAN: Why It Became the Overlay Standard

A pure leaf-spine topology solves the physical layer problem. It doesn’t, by itself, solve the problem of providing Layer 2 domains across a routed fabric — which you often need for workload mobility, legacy application requirements, or simply bridging across racks.

VXLAN (Virtual Extensible LAN) addresses this by encapsulating Layer 2 frames in UDP, allowing them to traverse a routed IP network. A leaf switch acts as a VTEP (VXLAN Tunnel Endpoint), encapsulating traffic from servers into VXLAN and forwarding it across the IP fabric to the destination leaf, where it’s decapsulated and delivered.
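To make the encapsulation concrete, here is a minimal sketch of the 8-byte VXLAN header that a VTEP places in front of the original Ethernet frame. It builds only the VXLAN header; the outer UDP, IP, and Ethernet headers are assumed to be added by the sending stack, and the function names are hypothetical.

```python
import struct

VXLAN_UDP_PORT = 4789        # IANA-assigned UDP destination port for VXLAN
VXLAN_FLAG_VNI_VALID = 0x08  # "I" flag: the VNI field carries a valid value

def vxlan_header(vni: int) -> bytes:
    """Build the 8-byte VXLAN header for a 24-bit VXLAN Network Identifier."""
    if not 0 <= vni < 2**24:
        raise ValueError("VNI must fit in 24 bits")
    # Word 1: flags in the top byte, then 24 reserved bits.
    # Word 2: VNI in the top 24 bits, then 8 reserved bits.
    return struct.pack("!II", VXLAN_FLAG_VNI_VALID << 24, vni << 8)

def encapsulate(inner_frame: bytes, vni: int) -> bytes:
    """Return the UDP payload: VXLAN header followed by the original L2 frame."""
    return vxlan_header(vni) + inner_frame

def parse_vni(udp_payload: bytes) -> int:
    """Extract the VNI on the receiving VTEP, checking the valid-VNI flag."""
    flags_word, vni_word = struct.unpack("!II", udp_payload[:8])
    if not (flags_word >> 24) & VXLAN_FLAG_VNI_VALID:
        raise ValueError("VNI flag not set")
    return vni_word >> 8

payload = encapsulate(b"\xaa" * 14, vni=10100)  # dummy 14-byte inner frame
print(parse_vni(payload))
```

The 24-bit VNI is what allows roughly 16 million segments, compared with the 4,094 usable IDs of a 12-bit VLAN tag.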

The early challenge with VXLAN was the control plane: how VTEPs learned about each other and about the MAC addresses behind them. Early implementations relied on data-plane flood-and-learn, typically over underlay multicast, which didn’t scale cleanly. EVPN (Ethernet VPN), originally developed for MPLS-based service provider deployments, solved this by providing a BGP-based control plane for VXLAN. VTEPs advertise their MAC and IP bindings via BGP EVPN routes, eliminating the need for flood-and-learn and providing much cleaner behavior at scale.
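The control-plane idea can be illustrated with a toy model. The sketch below is not a BGP implementation: `MacIpRoute` is a simplified stand-in for an EVPN MAC/IP advertisement (route type 2), and the `Vtep` class, addresses, and VNI are invented for illustration. It shows only the principle that a remote VTEP learns where a MAC lives from an advertisement rather than from flooded traffic.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class MacIpRoute:
    """Simplified stand-in for an EVPN MAC/IP advertisement (route type 2)."""
    vni: int
    mac: str
    ip: str
    next_hop_vtep: str

class Vtep:
    def __init__(self, vtep_ip: str):
        self.vtep_ip = vtep_ip
        # (vni, mac) -> IP address of the remote VTEP that advertised it
        self.remote_macs: dict[tuple[int, str], str] = {}

    def advertise(self, vni: int, mac: str, ip: str) -> MacIpRoute:
        """Announce a locally attached host, naming this VTEP as next hop."""
        return MacIpRoute(vni, mac, ip, self.vtep_ip)

    def receive(self, route: MacIpRoute) -> None:
        """Install a remote binding learned from the control plane."""
        if route.next_hop_vtep != self.vtep_ip:  # ignore our own routes
            self.remote_macs[(route.vni, route.mac)] = route.next_hop_vtep

    def lookup(self, vni: int, mac: str):
        """Return the remote VTEP for a MAC, or None if it is unknown."""
        return self.remote_macs.get((vni, mac))

leaf1, leaf2 = Vtep("10.0.0.1"), Vtep("10.0.0.2")
leaf2.receive(leaf1.advertise(10100, "00:11:22:33:44:55", "192.168.10.5"))
print(leaf2.lookup(10100, "00:11:22:33:44:55"))
```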

The combination of VXLAN (data plane) and EVPN (control plane), running over a BGP-based IP fabric, is now the industry standard for modern data center overlays. It’s supported across all the major switching platforms — Arista, Cisco Nexus, Juniper, Nokia — and the interoperability story has matured significantly over the past several years.

The Underlay: Where Designers Get It Wrong

The overlay gets the most discussion, but the underlay — the routed IP fabric over which VXLAN runs — is where implementation decisions have the biggest operational impact.

The current consensus is to run eBGP as the underlay routing protocol, using private ASNs. This means each leaf switch has its own AS number, and each spine switch has its own AS number (or a shared spine AS, depending on the design). The elegance of this approach is that BGP’s path selection attributes can be tuned to ensure ECMP load-sharing works correctly, and the operational tooling around BGP is mature and well understood.
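As a rough sketch of one such numbering plan, the code below gives all spines a shared private ASN and each leaf its own, then models the AS-path loop check that eBGP applies on receipt. The helper names and device names are hypothetical, and the loop check is reduced to its core rule (reject a route whose AS path already contains your own ASN).

```python
PRIVATE_ASNS = range(64512, 65535)  # 16-bit private-use ASNs, 64512..65534

def assign_asns(leaves: list[str], spines: list[str]) -> dict[str, int]:
    """Give all spines one shared ASN and each leaf its own ASN."""
    if len(leaves) + 1 > len(PRIVATE_ASNS):
        raise ValueError("not enough 16-bit private ASNs; use the 32-bit range")
    pool = iter(PRIVATE_ASNS)
    spine_asn = next(pool)
    plan = {spine: spine_asn for spine in spines}
    plan.update({leaf: next(pool) for leaf in leaves})
    return plan

def advertise(sender_asn: int, as_path: list[int]) -> list[int]:
    """An eBGP speaker prepends its own ASN when advertising to a peer."""
    return [sender_asn] + as_path

def accepts(receiver_asn: int, as_path: list[int]) -> bool:
    """Loop check: reject any route whose AS path contains the receiver's ASN."""
    return receiver_asn not in as_path

plan = assign_asns(["leaf1", "leaf2"], ["spine1", "spine2"])

# leaf1 originates a prefix; follow it to spine1, then leaf2, then back up to spine2.
path_at_spine1 = advertise(plan["leaf1"], [])
path_at_leaf2 = advertise(plan["spine1"], path_at_spine1)
path_back_at_spine2 = advertise(plan["leaf2"], path_at_leaf2)

print(accepts(plan["leaf2"], path_at_leaf2))         # leaf2 installs the route
print(accepts(plan["spine2"], path_back_at_spine2))  # spine2 rejects the detour
```

In this plan the shared spine ASN means a route that has already crossed one spine is refused by every other spine, so leaf-spine-leaf-spine detours are never installed.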

Some implementations use OSPF or IS-IS as the underlay IGP, which is perfectly valid and simpler in many ways — particularly in smaller fabrics where the additional flexibility of BGP isn’t needed. But eBGP underlay has become the dominant pattern at scale, particularly for fabrics that may grow significantly over time.

Common underlay design mistakes include:

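Reusing AS numbers carelessly. A shared spine AS with a unique AS per leaf works without special handling, and BGP’s AS-path loop check then usefully rejects routes that have bounced from one spine through a leaf to another spine. The trouble starts when leaves share an AS number: a leaf sees its own AS in the path of routes originated by other leaves and drops them, so you need to configure allowas-in or use AS path manipulation to avoid route filtering issues. Understanding exactly why is important before you deploy.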

Ignoring BFD. Default BGP hold timers are slow: 90 to 180 seconds, depending on the platform. Bidirectional Forwarding Detection (BFD) allows sub-second failure detection and is essential for fast convergence in production fabrics, particularly for failures that do not bring the physical link down. It should be standard, not optional.

Underprovisioning spine bandwidth. Spines carry all inter-leaf traffic. If your leaf switches have 32 x 400GbE server-facing ports and 8 x 400GbE spine-facing uplinks, your oversubscription ratio is 4:1. That’s fine for general enterprise workloads but problematic for AI training traffic or other high-bandwidth east-west applications. Know your oversubscription numbers before you deploy.
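Two of the numbers in this list are easy to sanity-check before deployment. The sketch below computes BFD detection time as transmit interval times detect multiplier (a simplification that ignores interval negotiation) and the leaf oversubscription ratio as server-facing bandwidth over spine-facing bandwidth. The 300 ms interval and multiplier of 3 are example values, not recommendations, and the function names are hypothetical.

```python
from fractions import Fraction

def bfd_detection_ms(interval_ms: int, multiplier: int) -> int:
    """Approximate BFD detection time: interval x detect multiplier."""
    return interval_ms * multiplier

def oversubscription(server_ports: int, server_gbps: int,
                     uplink_ports: int, uplink_gbps: int) -> Fraction:
    """Ratio of server-facing to spine-facing bandwidth on one leaf."""
    return Fraction(server_ports * server_gbps, uplink_ports * uplink_gbps)

def as_ratio(ratio: Fraction) -> str:
    """Format a Fraction in the usual N:M notation."""
    return f"{ratio.numerator}:{ratio.denominator}"

detect_ms = bfd_detection_ms(interval_ms=300, multiplier=3)
hold_ms = 90 * 1000  # a 90-second BGP hold timer, for comparison
print(f"BFD detects in {detect_ms} ms, {hold_ms // detect_ms}x faster than the hold timer")

# The leaf from the example above: 32 x 400GbE down, 8 x 400GbE up.
print(as_ratio(oversubscription(32, 400, 8, 400)))
```

Running the same function on a proposed leaf model before ordering hardware makes it obvious how many uplinks a target ratio requires.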

When Alternatives Make Sense

Leaf-spine is the right architecture for mid-to-large data centers with meaningful east-west traffic. There are legitimate situations where simpler or different topologies are appropriate:

Very small environments. If you have fewer than 20–30 servers and no significant east-west traffic requirements, a simple collapsed core or even a pair of redundant top-of-rack switches may be perfectly adequate. Don’t overbuild.

Purpose-built AI clusters. For GPU clusters doing distributed training, some organizations are exploring rail-optimized topologies, in which the NIC serving the same GPU position in every server connects to the same leaf switch (a “rail”), to reduce the cross-fabric contention that a conventional leaf-spine layout can exhibit at scale. This is a relatively advanced design pattern and adds operational complexity; for most organizations, a well-designed leaf-spine fabric is still the right starting point.

Campus-attached data center rooms. In environments where the “data center” is a server room attached to an office campus and workloads are largely north-south, a fully routed leaf-spine fabric may be architectural overhead. A simpler design with redundant aggregation switches may be more appropriate and easier to operate.

The Operational Reality

The most underappreciated aspect of leaf-spine with EVPN/VXLAN is the operational burden. This is not a set-and-forget architecture. BGP EVPN has a learning curve. VXLAN troubleshooting requires understanding both the overlay and underlay simultaneously. Network engineers who came up through traditional three-tier environments need training and hands-on experience to become proficient.

Organizations that deploy this architecture without investing in the operational knowledge to run it reliably will struggle. Vendor automation tooling helps — but it doesn’t eliminate the need to understand what’s happening underneath.

The architecture is sound. The technology is mature. The implementation and operational details are where the real work, and the real risk, live.

Alan Sukiennik is the founder of Acton Pacific Strategies, a Las Vegas-based independent infrastructure advisory firm. He has 30 years of experience in enterprise and service provider networking, including senior engineering roles at Arista Networks, F5 Networks, BlueCat Networks, and Nokia. Reach him at alan@actonpacific.com or schedule a consultation.